From www.tomshardware.com

Nvidia sells its H100 AI GPUs in huge volumes, and each consumes up to 700W of power. At 61% annual utilization, that is roughly as much electricity as one occupant of an average American household uses. Now that Nvidia is selling its new GPUs in high volumes for AI workloads, the aggregate power consumption of these GPUs is predicted to be as high as that of a major American city. Some quick back-of-the-envelope math also reveals that the GPUs will consume more power than some small European countries.

The total power consumption of the data centers used for AI applications today is comparable to that of the nation of Cyprus, French firm Schneider Electric estimated back in October. But what about the power consumption of the most popular AI processors, Nvidia’s H100 and A100?

Paul Churnock, the Principal Electrical Engineer of Datacenter Technical Governance and Strategy at Microsoft, believes that Nvidia’s H100 GPUs will consume more power than all of the households in Phoenix, Arizona, by the end of 2024, when millions of these GPUs are deployed. However, their total power consumption will be less than that of households in larger cities, like Houston, Texas.

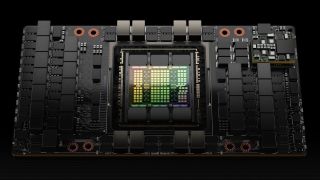

“This is Nvidia’s H100 GPU; it has a peak power consumption of 700W,” Churnock wrote in a LinkedIn post. “At a 61% annual utilization, it is equivalent to the power consumption of the average American household occupant (based on 2.51 people/household). Nvidia’s estimated sales of H100 GPUs is 1.5 – 2 million H100 GPUs in 2024. Compared to residential power consumption by city, Nvidia’s H100 chips would rank as the 5th largest, just behind Houston, Texas, and ahead of Phoenix, Arizona.”

Indeed, at 61% annual utilization, an H100 GPU would consume approximately 3,740 kilowatt-hours (kWh) of electricity annually. Assuming that Nvidia sells 1.5 million H100 GPUs in 2023 and two million H100 GPUs in 2024, there will be 3.5 million such processors deployed by late 2024. In total, they will consume a whopping 13,091,820,000 kilowatt-hours (kWh) of electricity per year, or 13,091.82 GWh.
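The arithmetic behind these figures can be reproduced with a short Python sketch. It is only a back-of-the-envelope check under the article's stated assumptions: a 700W peak draw, 61% annual utilization, a 365-day year, and 3.5 million deployed GPUs. The function and variable names are illustrative, and treating a GPU as drawing its full peak power whenever it is utilized is a simplification.

```python
# Back-of-the-envelope check of the figures quoted in the article.
# Assumptions (from the article): 700 W peak draw, 61% annual utilization,
# 1.5 million GPUs sold in 2023 plus 2 million in 2024.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_kwh(peak_watts: float, utilization: float) -> float:
    """Energy used in one year, in kWh, at a given average utilization."""
    return peak_watts / 1000 * utilization * HOURS_PER_YEAR


per_gpu_kwh = annual_energy_kwh(peak_watts=700, utilization=0.61)
fleet_size = 1_500_000 + 2_000_000              # GPUs deployed by late 2024
fleet_gwh = per_gpu_kwh * fleet_size / 1_000_000  # 1 GWh = 1,000,000 kWh

print(f"{per_gpu_kwh:,.2f} kWh per GPU per year")
print(f"{fleet_gwh:,.2f} GWh per year for {fleet_size:,} GPUs")
```

Changing the utilization or the fleet size in this sketch shows how sensitive the headline number is to those two assumptions.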

To put the number into context, approximately 13,092 GWh is roughly the annual electricity consumption of countries such as Georgia, Lithuania, or Guatemala. While this amount of power consumption appears rather shocking, it should be noted that AI and HPC GPU efficiency is increasing. So, while Nvidia’s Blackwell-based B100 will likely outpace the power consumption of H100, it will offer higher performance and, therefore, get more work done for each unit of power consumed.


The post Nvidia’s H100 GPUs will consume more power than some countries — each GPU consumes 700W of power, 3.5 million are expected to be sold in the coming year | Tom’s Hardware first appeared on www.tomshardware.com
